PROPHET FORECAST

From data to decision — forecasting for a seasonal restaurant

As a restaurant manager, it's easy to dig into the point-of-sale (POS) system to get a portrait of last season, but putting that data side by side with forward-looking patterns to build an accurate prediction is a completely different challenge. For a small seasonal restaurant, that upgrade in accuracy can make a real difference: better staffing decisions, smarter ordering, fewer surprises. The model was not built for perfect accuracy; it was built to guide business decisions.

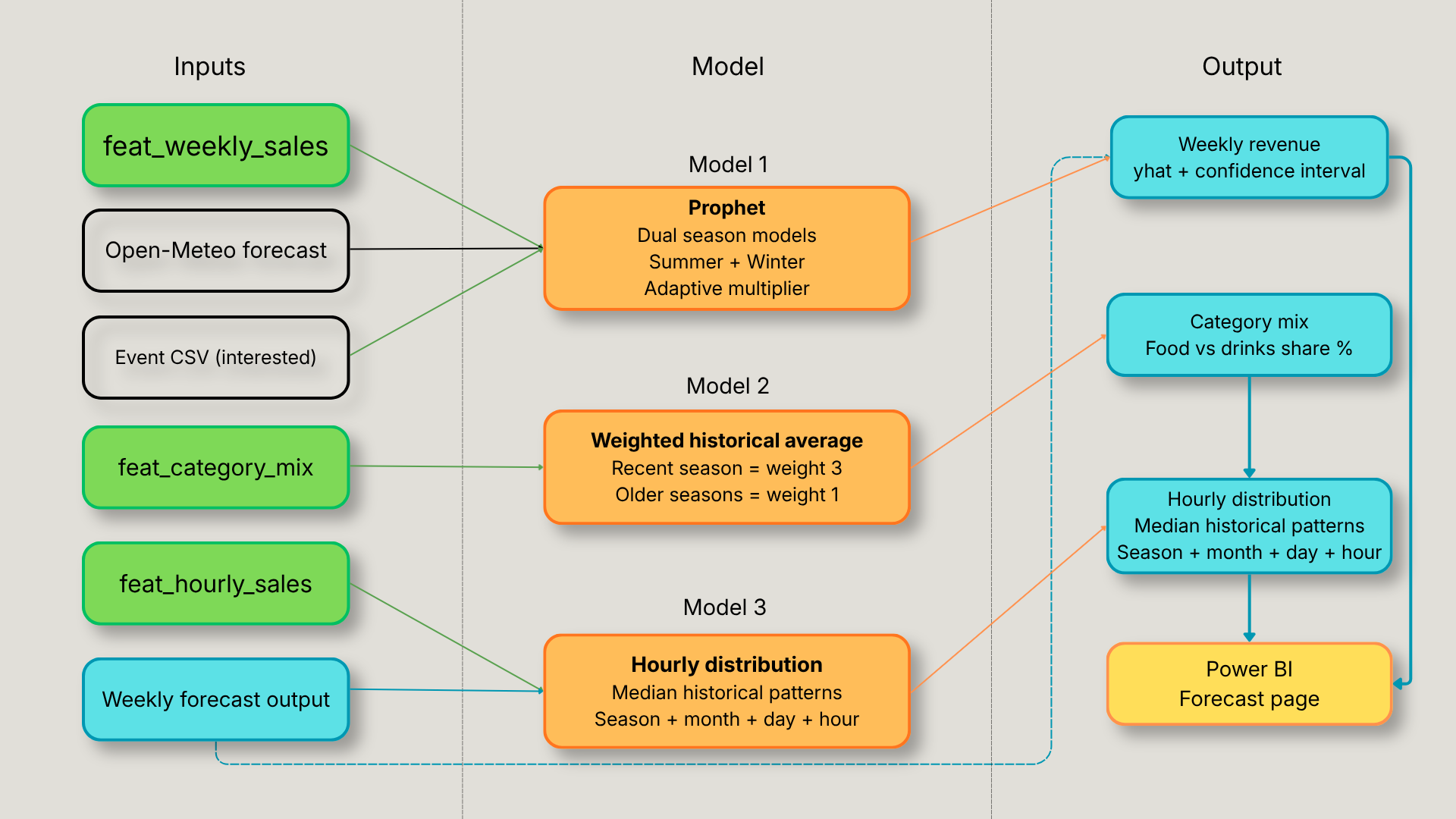

What looks like one forecasting model is actually three, each built differently because each problem required a different approach. Weekly revenue is forecasted with Facebook Prophet. Category mix, how much of that revenue will come from food vs drinks, uses a weighted historical average. Hourly distribution breaks the weekly forecast down into days and hours using historical patterns. Each model feeds the next, and all three land in Power BI as a single integrated forecast page.

Why Prophet

For a restaurant with only a few seasons of historical data, Prophet was the right fit. It performs reliably with limited training data, where models like XGBoost would overfit. The seasonal gap between summer and winter operations, the known event spikes, and external regressors like weather all map naturally onto what Prophet was designed to handle. Confidence intervals come out of the box, which matters for a dashboard where a manager needs to see not just the prediction but the range of realistic outcomes. And as more seasons accumulate, the model is designed to be replaced or upgraded without touching the rest of the pipeline.

Three models, three insights

The forecasting system is built on three separate models, each solving a different problem with a different method. The choice of method for each wasn't arbitrary: it came directly from the constraints of the data and what the business actually needed to know.

Model 1: Weekly revenue forecast

The core of the system. Prophet takes feat_weekly_sales as input and produces a weekly revenue forecast with confidence intervals for the next four weeks. Two separate models are trained, one for summer, one for winter, because the two seasons behave fundamentally differently and a single model trying to learn both produces worse predictions for each. Weather and event data are attached as regressors, giving Prophet context beyond just the revenue history. The forecast for week 1 uses actual Open-Meteo forecast weather. Weeks 2-4 fall back to seasonal historical averages, an honest acknowledgment that weather predictability drops sharply beyond 7 days.
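The setup described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual script: the regressor names (`temp_max`, `precip_mm`, `event_interested` as a weekly count), the row layout, and both function names are assumptions made for the example. Only `Prophet`, `add_regressor`, `fit`, and `predict` are real Prophet calls.

```python
from datetime import date, timedelta

# Hypothetical regressor names: the write-up only says weather and event
# data are attached, not which columns are used.
REGRESSORS = ["temp_max", "precip_mm", "event_interested"]


def build_future_rows(first_week, horizon, forecast_weather, seasonal_avg, events):
    """Build one regressor row per forecast week.

    Week 1 uses forecast weather; later weeks fall back to the
    seasonal historical averages.
    """
    rows = []
    for i in range(horizon):
        week_start = first_week + timedelta(weeks=i)
        weather = forecast_weather if i == 0 else seasonal_avg
        rows.append({
            "ds": week_start,
            "temp_max": weather["temp_max"],
            "precip_mm": weather["precip_mm"],
            "event_interested": events.get(week_start, 0),
        })
    return rows


def fit_and_forecast(history_rows, future_rows):
    """Fit one Prophet model on one season's history and predict future rows.

    history_rows needs the keys ds, y, and every name in REGRESSORS.
    """
    # Imported lazily so build_future_rows works without these packages.
    import pandas as pd
    from prophet import Prophet

    model = Prophet(weekly_seasonality=False, daily_seasonality=False)
    for name in REGRESSORS:
        model.add_regressor(name)
    model.fit(pd.DataFrame(history_rows))
    forecast = model.predict(pd.DataFrame(future_rows))
    # yhat_lower / yhat_upper are the bounds of the uncertainty interval.
    return forecast[["ds", "yhat", "yhat_lower", "yhat_upper"]]
```

Calling `fit_and_forecast` once with summer history and once with winter history would mirror the two-model split, with `build_future_rows(..., horizon=4, ...)` supplying the four forecast weeks.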

Model 2: Category mix forecast

Prophet was the first instinct for category mix forecasting too, but with 29 categories and only a few seasons of data, it overfit and collapsed to predicting one dominant category. The solution was simpler and more honest: a weighted historical average. The most recent season gets weight 3, the second most recent gets weight 2, and older seasons get weight 1. This ensures the model reflects recent menu changes and customer behavior without pretending it has more data than it does.

Model 3: Hourly distribution

The weekly forecast from Model 1 is a single number: total revenue for the week. That's not enough to plan staffing. Model 3 takes that number and breaks it into days and hours using median historical distributions from feat_hourly_sales. The grain is season + month + day of week + hour. Month is included specifically because of the sunset effect discovered in the data: peak service hour shifts from 18h in winter to 19h in summer, with September Saturdays peaking at 42% of daily revenue at 19h.

Model accuracy — knowing what the numbers mean

Accuracy varies significantly between seasons and between points in the season. Understanding why matters as much as the numbers themselves.

Summer:

Weeks 1-8: ~16% MAPE (closest to training data, season following historical patterns)

Full season: ~20% MAPE

Adaptive multiplier (kicks in at week 8): reduced mid-season MAPE from 35.6% to 29.1%

Winter: ~40% MAPE. The season is shorter and more volatile, with fewer data points. The multiplier was tested and made things worse, so winter stays pure Prophet.

These numbers come from a leave-one-season-out backfill: Prophet is trained on all data before each complete historical season and used to predict it, simulating real production performance without hindsight. Results are stored in forecast.weekly_sales_backfill and power the model accuracy page in Power BI.
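For readers less familiar with the metric, here is a small sketch of MAPE and of the season-by-season split described above, where each held-out season is predicted using only the seasons before it. The function names and the season labels are illustrative assumptions, not taken from the project's scripts.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, in percent.

    Weeks with zero actual revenue are skipped to avoid dividing by zero
    (an assumption for this sketch).
    """
    pairs = [(a, p) for a, p in zip(actual, predicted) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to score")
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in pairs) / len(pairs)


def backfill_splits(seasons):
    """Yield (training_seasons, held_out_season) pairs.

    seasons must be ordered oldest to newest. Training data always
    precedes the held-out season, so no future data leaks in.
    """
    for i in range(1, len(seasons)):
        yield seasons[:i], seasons[i]


# Hypothetical season labels, purely for illustration.
for train, held_out in backfill_splits(["season_a", "season_b", "season_c"]):
    print(f"train on {train}, predict {held_out}")
```

A weekly forecast of 110 against an actual of 100 contributes a 10% error, so a ~16% MAPE means the forecast is off by about a sixth of actual revenue in a typical week.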

The wildfire summer

Summer 2023 is excluded from the prophet_weekly_sales.py training data because regional wildfires suppressed the July peak entirely, making the revenue shape completely abnormal. Excluding it improved early-week accuracy:

Early-week MAPE before exclusion: 21%

Early-week MAPE after exclusion: 16%

The decision came from a conversation, not the data alone. The data showed an anomaly; the stakeholder explained why. The data is only as good as the context behind it.

Model 2: Category mix

prophet_category_mix.py doesn't use Prophet. With 29 categories and only a few seasons, Prophet overfits and collapses to one dominant category. A weighted historical average is more honest at this data scale:

Most recent season: weight 3

Second most recent: weight 2

Older seasons: weight 1

The architecture is ready to switch to XGBoost at 5+ seasons; the flag is already in the config.
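The 3/2/1 weighting can be sketched in a few lines. This is an illustrative version only: the input layout (one dictionary of category shares per season, ordered oldest to newest) and the function names are assumptions, and a category missing from a season is treated as a zero share.

```python
def season_weight(rank):
    """rank 0 = most recent season, 1 = second most recent, 2+ = older."""
    return {0: 3, 1: 2}.get(rank, 1)


def weighted_category_mix(shares_by_season):
    """Blend per-season category shares into one forecast mix.

    shares_by_season: list of {category: share} dicts, oldest to newest.
    Returns {category: share} normalised to sum to 1.
    """
    totals = {}
    for rank, shares in enumerate(reversed(shares_by_season)):
        weight = season_weight(rank)
        for category, share in shares.items():
            totals[category] = totals.get(category, 0.0) + weight * share
    norm = sum(totals.values())
    if norm == 0:
        raise ValueError("no category shares to average")
    return {category: value / norm for category, value in totals.items()}


mix = weighted_category_mix([
    {"food": 0.6, "drinks": 0.4},  # oldest season, weight 1
    {"food": 0.7, "drinks": 0.3},  # second most recent, weight 2
    {"food": 0.5, "drinks": 0.5},  # most recent, weight 3
])
```

Multiplying each share in `mix` by the weekly revenue forecast from Model 1 gives the expected revenue per category.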

The more interesting decision is about event data. For future forecast weeks:

event_went → hardcoded to zero (in a small market, people click Interested but rarely update to Going, so this count systematically undercounts attendance)

event_interested → used as the leading indicator of actual demand

One line of code, but it came from understanding how this specific community behaves, not from the data itself.
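As a rough illustration of that rule, the sketch below builds the event regressors for a future week. The column names `event_went` and `event_interested` come from the text above; the function name and the input dictionary are assumptions for the example.

```python
def future_event_regressors(event_counts):
    """Return the event regressor values for a future forecast week.

    event_counts: raw counts scraped for upcoming events, for example
    {"event_went": 4, "event_interested": 180}.
    """
    return {
        # Going counts are unreliable ahead of time in this market,
        # so the regressor is zeroed for future weeks.
        "event_went": 0,
        # Interested counts act as the leading indicator of demand.
        "event_interested": event_counts.get("event_interested", 0),
    }
```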

Model 3: Hourly distribution

prophet_hourly_distribution.py takes the weekly Prophet output and breaks it into a full week of hourly revenue predictions. It applies median historical distributions at four levels:

Season

Month: added specifically because of the sunset effect discovered in the data

Day of week

Hour

Key finding: peak service hour shifts from 18h in winter to 19h in summer, with September Saturdays peaking at 42% of daily revenue at 19h. Aggregating by season alone would smooth over this entirely.

Median is used throughout rather than average, because a single event night can skew an average significantly. For staffing decisions, typical is more useful than exceptional.
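A simplified sketch of the idea follows, assuming each historical observation is a slot's share of its week's revenue. The tuple layout, the function names, and the renormalisation step (medians taken per slot do not sum to exactly 1) are assumptions for the example, not a description of prophet_hourly_distribution.py.

```python
from collections import defaultdict
from statistics import median


def median_hourly_shares(history):
    """Build a median share profile per (season, month).

    history: iterable of (season, month, day_of_week, hour, share_of_week).
    Returns {(season, month): {(day_of_week, hour): share}}, with each
    profile renormalised so its shares sum to 1.
    """
    samples = defaultdict(lambda: defaultdict(list))
    for season, month, dow, hour, share in history:
        samples[(season, month)][(dow, hour)].append(share)

    profiles = {}
    for key, slots in samples.items():
        medians = {slot: median(values) for slot, values in slots.items()}
        total = sum(medians.values())
        if total > 0:
            profiles[key] = {slot: m / total for slot, m in medians.items()}
    return profiles


def allocate_week(weekly_revenue, profile):
    """Split a weekly revenue forecast across (day_of_week, hour) slots."""
    return {slot: weekly_revenue * share for slot, share in profile.items()}


# Toy data: one outlier night (0.90) does not move the 19h median.
history = [
    ("summer", 9, "Sat", 18, 0.10), ("summer", 9, "Sat", 18, 0.10),
    ("summer", 9, "Sat", 18, 0.10), ("summer", 9, "Sat", 19, 0.30),
    ("summer", 9, "Sat", 19, 0.30), ("summer", 9, "Sat", 19, 0.90),
]
profile = median_hourly_shares(history)[("summer", 9)]
hourly = allocate_week(1000.0, profile)
```

With an average instead of a median, the 0.90 outlier would pull the 19h slot from 0.30 up to 0.50 before normalisation, which is exactly the distortion the median avoids.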

Known limitations

The forecasting system is designed to improve over time. Several constraints exist today that will be addressed as more data accumulates:

Limited training data: with only a few complete seasons, all three models are working with a small sample. Predictions improve meaningfully with each additional season.

Weather fallback for weeks 2-4: beyond the 7-day forecast window, weather regressors fall back to seasonal historical averages. A rainy week predicted as average will produce a less accurate forecast.

Category mix is static across the forecast window: the weighted average produces the same category split for all four forecast weeks. It doesn't account for weekly variation within a season.

No customer count: as documented in the dbt section, customer count cannot be derived reliably from the POS data. The hourly model predicts revenue, not covers, which is a meaningful difference for kitchen planning.

What's next

The architecture was built with future upgrades in mind:

Rolling window: already configured, activates at 6+ seasons to keep the model focused on recent behavior

XGBoost for category mix: flag already in prophet_category_mix.py, switches on at 5+ seasons

Automatic anomaly detection: currently, anomalous seasons like 2023 are excluded manually after a stakeholder conversation. A future version could flag statistical outliers automatically and prompt for confirmation.

Customer count proxy: once a reliable estimation method is validated for specific analyses, it could be added as a regressor to improve the hourly distribution model

Full script breakdown and design decisions on GitHub